
Both images were taken with similar settings, but if I told you that one of those was taken with a faster shutter speed than the other, you might have assumed it was the one that is darker overall.

The two RAW files are interesting, though. The Bob Rossian trees and Dali windows look fine when you’re looking at the whole image at a normal size, but as you zoom in, the illusion is revealed. The HEIC image gets to take advantage of HDR processing to better balance highlights and shadows, but there’s almost no fidelity left in this area. There’s a lot of fine detail in this area: trees, windows, flagpoles, sandstone, and scaffolding.

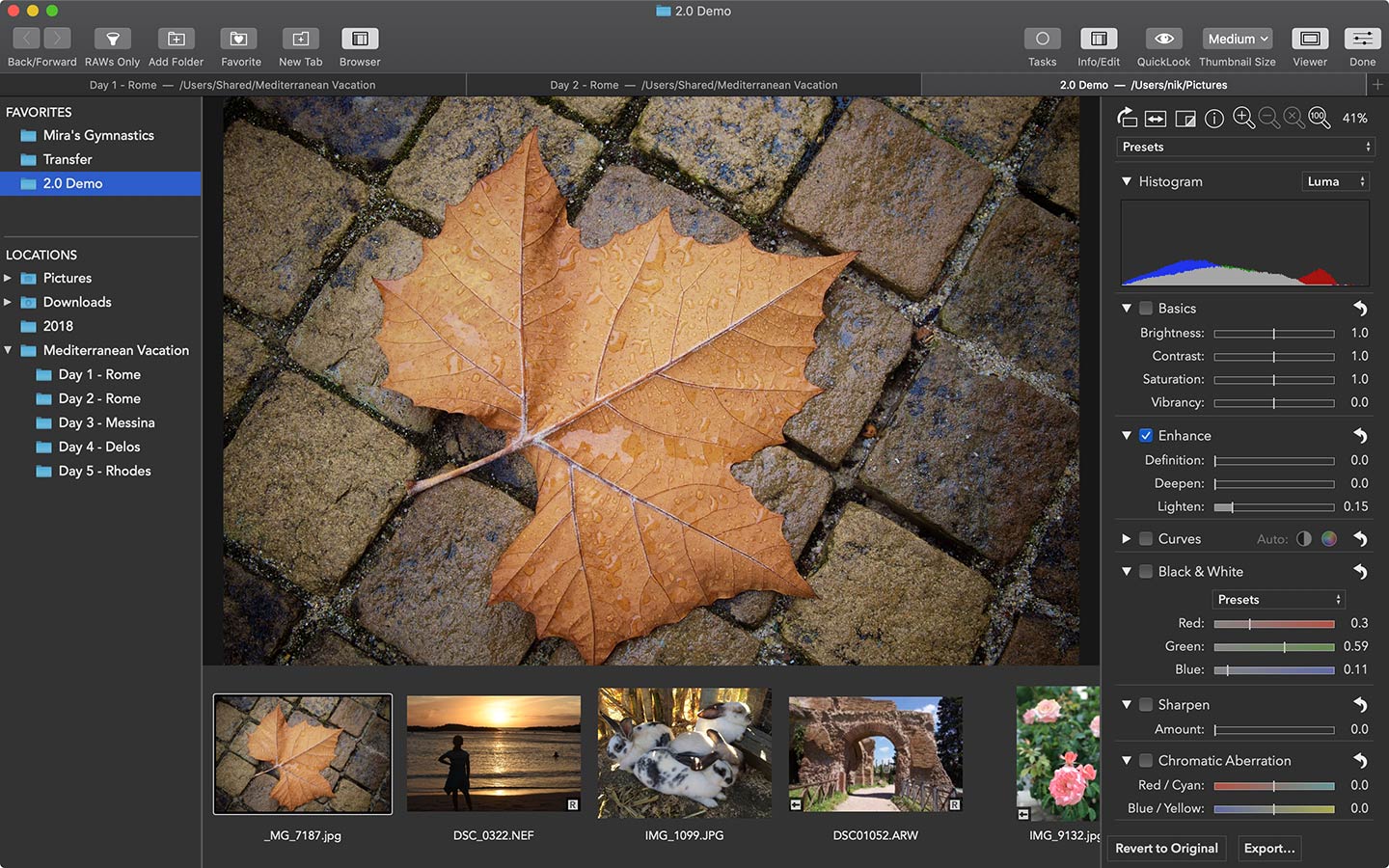

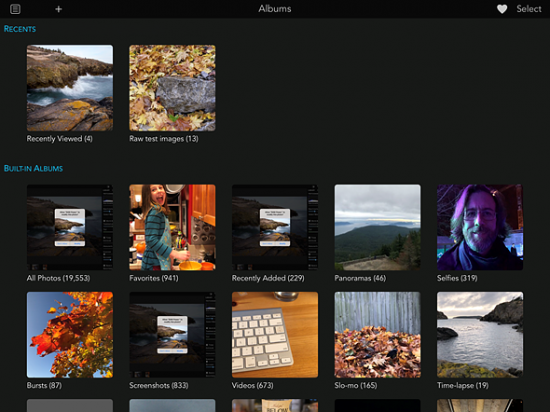

I’m going to start at McDougall Centre on the left-hand side of the image midway down:

Camera app, HEIC, 100% crop

Camera app, ProRAW, 100% crop

You can already see many differences, but I want to highlight a few specific areas. Let’s start with this picture of Calgary’s hopelessly Soviet west side:

Camera app, HEIC

Camera app, ProRAW

That sentence is pretty vague, so let me show you a few examples. The short version of my findings is that ProRAW bridges the gap between the fidelity and flexibility of a RAW photo and the finished product of a processed image - and whether this is what you want, as a photographer, is going to depend hugely on capture circumstance and what you are hoping to achieve. These are merely some impressions after using and editing ProRAW for about a month. These are also not going to be particularly exciting images. This is not going to be the kind of in-depth guide that the Lux team is able to put together. This is the first time RAW photography has been available in the first-party Camera app, so of course it has been done in a typically Apple way: it’s RAW, encapsulated in the industry-standard Digital Negative file format (DNG), and it has a bunch of little tricks that make it different from unprocessed RAW photographs. So you can get a head start on editing, with noise reduction and multiframe exposure adjustments already in place - and have more time to tweak color and white balance. ProRAW gives you all the standard RAW information, along with the Apple image pipeline data. For an absurd amount of creative control. Here’s how Apple describes it:

Apple ProRAW.

The release of iOS 14.3 includes support for something Apple calls “ProRAW”, available on iPhone 12 Pro and 12 Pro Max models. But what if it were possible to combine the two? If you are shooting RAW images you are, by definition, not taking advantage of any of these computational photography advancements.
Skies and skin tones are separated to be colour-corrected and have noise reduction applied specific to those typical image elements; in poor lighting, multiple images are combined so that noise can be reduced without compromising texture and detail. Today’s iPhone photography is only half about what the camera actually sees; the other half is about how that individual image and its contents are interpreted. The numbers above only tell part of the story, though. As a result, I have been able to capture photos with better dynamic range and more detail than I would have thought possible for a phone using third-party apps like Halide.

These are literally microscopic changes, but they have a big impact with the tiny lens and sensor of a smartphone. Over time, the availability of this API has paid off as every new iPhone’s camera gets a little bit better.

It is possible to precisely adjust the white balance, instead of simply making the image more orange or blue, and you can recover shocking amounts of detail in shadows and highlights in a way that simply is not possible with compressed and processed images. Because RAW photos preserve the data straight off the sensor, they also allow for more editing flexibility. Here are the same unedited sample photos I showed in my review of the feature, both shot on an iPhone 6S:

Even on a camera from five years ago, you can see crisp edges on the windows of the building across the street, texture on the roof of the castle-like armoury in the bottom-centre, and more fidelity in the trees. When it first became possible to capture unprocessed iPhone camera data with the API introduced in iOS 10, I remember being shocked by the images I saw.
